The Ultimate Guide to Technical SEO for AI Search Engines for 2025

Discover how to optimize your website for AI-driven search engines like GPTBot, Gemini & Perplexity. This in-depth playbook for 2025 covers crawlability, schema, knowledge graphs, rendering and internal linking to boost your AI visibility.

Written by

Traditional SEO

Oct 20, 2025

Get found in AI search

AI search engines don’t just index pages; they extract relationships, entities, and answers from multiple sections of your content.

Schema how AI crawlers confidently connect your content to their knowledge graphs.

Broken links and ambiguous site structure can hide your best answers from AI visibility even if your SEO is perfect elsewhere.

Linking your data to external knowledge bases (think Wikipedia, Wikidata) is how you establish trust with AI.

Multiple-entity mapping and semantic triples give your pages depth, helping AI models cite you for semantically similar queries.

Technical Concepts

AI crawls your website more frequently than you’d imagine. And every time an AI engine crawls your site, whether it’s ChatGPT, Gemini, or Perplexity, it isn’t asking “What’s this page about?” It’s asking, “Can I trust this enough to repeat it?”

That single question is redefining how websites are built.

Because in this new era, your technical SEO isn’t just about being discoverable. It’s about being interpretable. Your metadata, schema, and internal links form the foundation that tells AI who you are, what you know, and why your information matters.

This blog will help you build that foundation. You’ll learn how to make your website readable to AI, how to structure your data for context and accuracy, and how to turn your site into a trusted source that AI consistently references in its answers.

Crawlability and Indexing

AI bots for search engines like ChatGPT, Perplexity, or Grok don’t scan web pages like traditional search engines, instead, they read, understand and build connections of your website’s content.

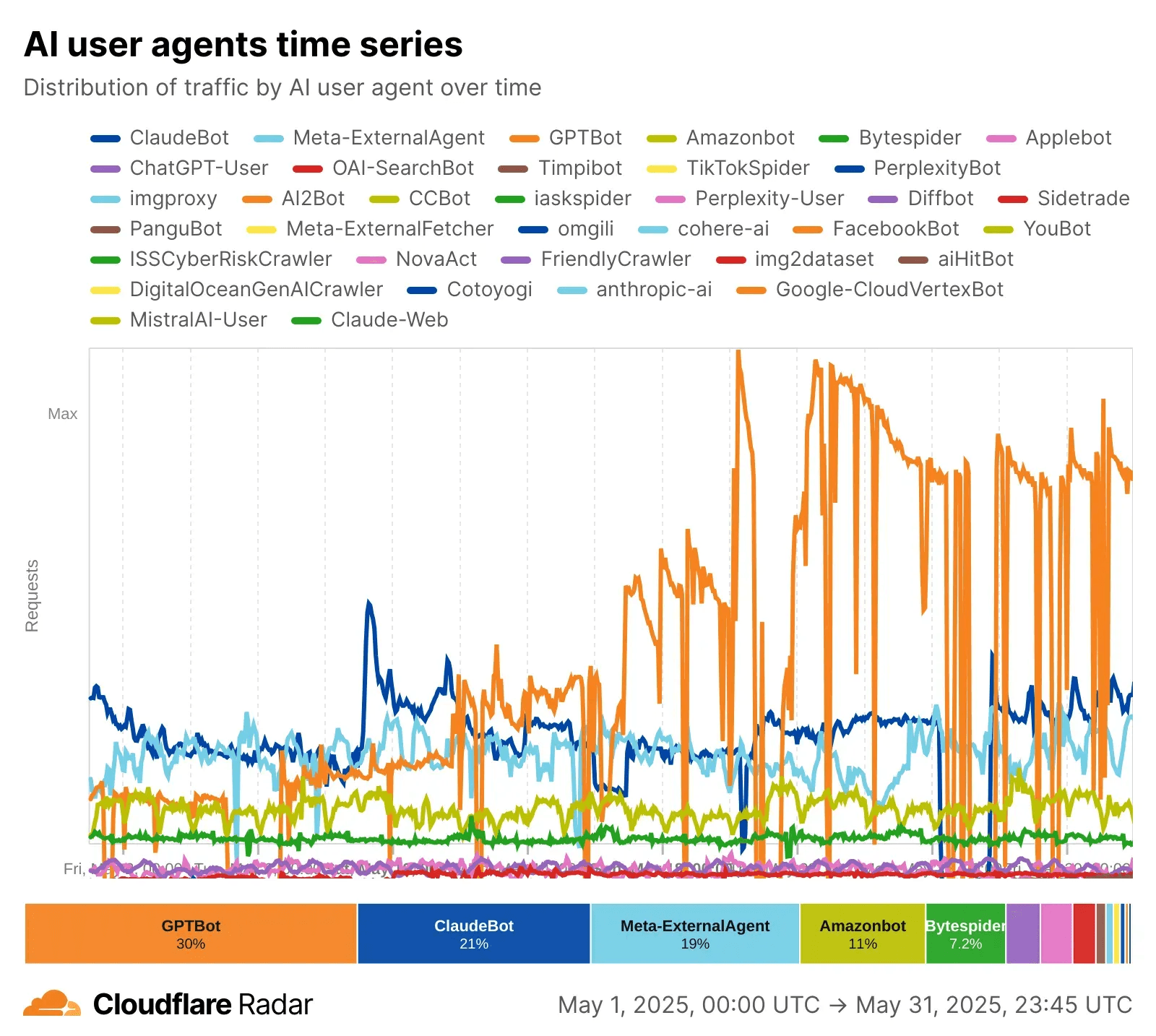

The ratio of bot hits to human visitors has sharply increased. Cloudflare data shows PerplexityBot's activity climbed by over 250% in 2025, while GPTBot’s traffic saw a 305% surge compared to 2024.

Bot (Owner) | Crawls/Click | AI Search Role | Citation Strength | |

|---|---|---|---|---|

GPTBot (OpenAI) | 60% | High (1,000+) | ChatGPT; trains/answers | Low–Moderate |

ClaudeBot | 3.6% | Highest | Claude; factual Q&A | Low |

Gemini (Google) | 13.5% | Low (5) | Gemini AI; search/answers | Highest |

PerplexityBot | 6.6% | Medium (195) | Perplexity; answer engine | High |

Googlebot/Gemini is the best for ongoing search referral and (still) sends the most users/citations

PerplexityBot is best if you want to be cited in direct answers

GPTBot/ClaudeBot are vital for training, but don’t send much search traffic

robots.txt Configuration

This is the first file that the AI crawlers check. Any misconfiguration here directly blocks AI from accessing the website content. Some misconfigurations that can reduce your AI visibility drastically:

Forgetting to allow GPTBot, Bingbot (used by ChatGPT and others), PerplexityBot, etc., to crawl your website. It’s important to double check access.

Accidentally disallowing key directories which includes important content

Blocking XML sitemaps. This leads to bots missing critical pages

Not updating for new AI bots. You should add them by name

User-agent: *with allow statements as they may be blocked by default

Recommended configuration:

Sitemaps and site structure

Your sitemaps act as roadmaps for AI bots by telling them which pages matter the most (the ones you want them to cite in their answer) and how often they’re updated.

Recommended practices for an AI-optimized sitemap:

Assign

<priority>to your pages to indicate which pages are important (e.g. FAQs, core articles, product pages)Indicate last modified date using

<lastmod>. This is super critical as AI prefers fresh content.A complimentary tag to include alongside

<lastmod>is<changefreq>to tell the bot how frequently this content changes

The arrangement of your pages, menus and links should be easy to understand without any hidden pathways. For example, your navigation menu should reflect the main site topics and the sub-topics should be connected with parent topics. Users and bots should reach any key page within a few clicks (ideally ≤3).

Few of the critical errors you should be mindful about:

Check your site for broken links which leads the bots to missing or deleted pages.

Validate link routes to avoid redirect loops.

Check server errors that hinder pages to load.

Using a diagnostic tool like Screaming Frog could be helpful here.

Pre-rendering and renderability

Most AI crawlers have severe limitations when processing JavaScript. Key things you should be mindful about when working with JS heavy website:

AI bots like GPTBot, PerplexityBot and ClaudeBot typically execute minimal or no JS.

They have a strict timeout (5-10 seconds)

Cannot process complex client-side routing

Fail with lazy-loaded content.

Static site generation

Static site generation is one of the recommended methods for optimal SEO and AEO. In this method, all the HTML pages for your website are created ahead of time, during the build process. This means we don’t wait for the user to visit the website. When a user visits, it serves a pre-built, ready-to-go HTML file.

Pre-rendering for bots

Another effective method is to pre-render website content for bots. We can generate and cache static HTML snapshots for bots and serve interactive versions to users. The typical process of how it works is:

Bot requests page

Middleware detects bot user-agent

Server checks if cached snapshot exists

If yes: serve cached HTML

If no: render page, cache HTML, serve to bot

Human users receive normal JavaScript app

Quick crawlers tests

View Page Source: Right-click → View Source. Is your content there? If not, it's JS-dependent.

Disable JavaScript: Chrome DevTools → Settings → Disable JavaScript. Can you see content?

Screaming Frog Crawl: Set rendering to "Text Only" mode. What does the crawler see

Google URL Inspection: Compare "Raw HTML" vs "Rendered HTML" tabs

AI Crawler Simulation: Use curl to fetch your page:

Benchmark metrics (source)

Time to First Byte (TTFB): <200ms ideal, <500ms acceptable

First Contentful Paint (FCP): <1.5s

Largest Contentful Paint (LCP): <2.5s

Schema and structured data

Schema markup is a piece of code that comprises of your website’s structured data. This is added as custom HTML in your website’s code. When LLMs crawl your website, it helps the bots to read website content as well as guide them to the right information.

Term | What It Is | Role | Example |

|---|---|---|---|

Structured Data | Formatted, machine-readable info | Describes your content | `"name": "Jane Smith", "jobTitle": "Data Scientist"` |

Schema | The vocabulary/framework | Labels & structures facts | `"@type": "Person", "@context": "https://schema.org"` |

JSON-LD implementation

The most common way to define the schema is using the JSON-LD (JavaScript Object Notation for Linked Data) format.

There are three mandatory elements

@context⇒ This defines the vocabulary we’re going to use for the entire schema. The most commonly used is Schema.org@type⇒ specifies the type of entity (Article, Product, Organisation, Person, etc)Properties⇒ these are the descriptive attributes that we use to define the entity. In the below example“headline”and“description”are properties

Different types of schemas

Article schema for all blog/content pages

headline: Title for snippet creation and knowledge card extraction.description: Used for summarizing and outlining answer context.author: Signals E-E-A-T (expertise, authority, trust); helps AI cite the right person.datePublished: Allows AI to reference the timeliness of content.mainEntityOfPage: Identifies the canonical source page for citation.

FAQPage schema

mainEntity: Directly represents FAQs for extraction and citation.Question: AI matches user queries with these explicit Q&A entries.acceptedAnswer: Lets AI pull precise, authoritative answers from your own content.

HowTo schema on instructional content

HowTo schema is defined specifically for guide type content. This is one of the content types that get cited by the AI very often alongside FAQs. If you want to read more about what content gets cited, you can read The Content Writing Playbook That Helped Our Customers 3x Their Visibility in AI Search

name/description: Define the task and context for AI to match step-by-step results.step: Gives AI actionable steps for answer extraction or voice synthesis.image: Enables visual answer cards or media citations in search/AI results.

Speakable schema on key content sections

Speakable schema tells voice assistants and AI readers which portions of your content should be read aloud. This is critical as voice search and AI audio responses grow.

@type: Typically anArticle,WebPage, or other content type.headline: Used for voice summary and AI answer card context.speakable: Tells voice assistants and AI which parts of your page should be read aloud or used for answer extraction.cssSelector: Specifies which sections (by CSS selector → e.g., specific headings, paragraph classes, summaries) are optimized for voice output.

Entity Mapping and Knowledge Graph

For AI to build holistic understanding, the goal is to create a comprehensive knowledge graph for your entire domain.

Pre-requisites for building a knowledge graph

Defining site entities

Audit your content to identify all major entities and assign canonical URLs that would later get linked with sub-topics. Some of the major entities could be:

Your brand/organization

Products and services

People (authors, executives, experts)

Locations (offices, service areas)

Concepts and topics (key subject matter areas)

Creating canonical URLs

Having canonical URLs is critical to help AI understand the graph semantically and for you to understand how your content connects with each entity.

Below is a example for setting up URLs for identified entities:

https://yoursite.com/about/company (Organization)

https://yoursite.com/products/seo-tool (Product)

https://yoursite.com/team/jane-doe (Person)

https://yoursite.com/topics/technical-seo (Concept/Topic)

Internal knowledge graph

Implement @id references and use the same @id for each entity

The

@idproperty is where schema transcends basic markup and becomes a knowledge graph. Every entity should have a unique identifier that AI systems can reference across your entire site and across the web.

Multi-entity mapping

AI answer engines excel at understanding complex relationships.

The

@graphproperty allows you to include multiple, related schema objects in a single block.In the below example → we use @graph to connect different types of schemas together.

Semantic triples

Semantic triples represent the atomic unit of knowledge in the semantic web: Subject → Predicate → Object. This structure is how AI systems internally represent all information. (source). Using semantic triples helps us establish a more effective relationship between different entities.

To explain in simpler terms for what each of these parts mean:

Subject: The entity being described (e.g., "OpenAI")

Predicate: The relationship or property (e.g., "develops")

Object: The value or related entity (e.g., "ChatGPT")

See a product entity with relationships example. To understand this in practice, see how each property-value pair here creates a semantic triple:

<AI SEO Optimizer Pro> <manufacturer> <TechSEO Solutions><AI SEO Optimizer Pro> <price> <299.00><AI SEO Optimizer Pro> <isRelatedTo> <Content Optimizer>

Internal Linking

Traditional SEO focused on keyword based anchoring which doesn’t work for AI visibility. For AEO, we need entity-rich anchors that define relationships between different content pieces.

Topic cluster

Think of topic cluster as a tree → you have the main trunk and the branches. In order for AI to build robust understanding of your website semantically, you need to think about your content in terms of a forest with multiple trees.

We have a pillar page (the trunk), which is a comprehensive guide covering a broad topic (3000-5000+ words). We then have cluster pages (branches) which are in-depth articles on specific subtopics (1500-2500+ words). To connect all of these together we implement bidirectional links - pillar links to all clusters and clusters link back to pillar and to each other.

URLs and Links

Having the right URL structure plays a huge role in helping AI make sense of your website content for semantic understanding.

Best Practices:

Use descriptive, hierarchical URLs

Keep URL depth to 3-4 levels maximum

Include primary keyword in URL

Use hyphens (not underscores) to separate words

Closing Thoughts & Next Steps for you

Building for AI search is not just about visibility. It is about clarity. When your website is semantically structured, technically sound, and entity linked, AI systems understand who you are and why they should trust you.

Here is what you can do right now to strengthen your foundation:

Start log file analysis to track AI bots and measure citation or referral rates.

Check your robots.txt for any accidental blocks. Allow key AI bots like GPTBot, ClaudeBot, Gemini, and PerplexityBot. Also, test your XML sitemaps for proper discoverability.

Pre render or enable server side rendering (SSR) for JavaScript heavy sites. Verify that your content appears in the HTML source and shows up in text only crawls using Screaming Frog.

Implement JSON LD schema across all important pages. Go beyond just Article and FAQ. Add HowTo, Speakable, Product, and rich entity linking with

@idandsameAsattributes.Clarify your content hierarchy. Ensure every valuable page is internally linked. Fix broken links, redirect loops, and orphaned content.

Map and connect your entities. Identify your key people, products, and concepts, and link them to trusted external authorities like Wikipedia or Wikidata.

By taking these actions, you help AI crawlers build a clear and trustworthy understanding of your brand. This increases your chances of being referenced, cited, and featured in AI generated answers.

Want to optimize for AI visibility? Click here to find out how we help our customers get found in AI search.

Frequently Asked Questions (FAQs)

1. What is technical SEO for AI search engines?

Technical SEO for AI search engines focuses on optimizing your website so AI crawlers like GPTBot, PerplexityBot, and Gemini can understand, index, and cite your content accurately. It goes beyond keyword optimization and ensures your site’s structure, schema, and entity mapping are AI-friendly.

2. How is technical SEO for AI different from traditional SEO?

Traditional SEO focuses on optimizing for keyword rankings in Google SERPs, while AI SEO ensures your content is machine-readable, semantically rich, and trusted by AI models. It emphasizes schema markup, knowledge graphs, and structured data to help LLMs extract and connect factual information from your site.

3. Which AI bots should I allow in my robots.txt?

You should allow major AI crawlers like GPTBot (OpenAI), ClaudeBot (Anthropic), PerplexityBot (Perplexity), Google-Extended (Gemini), CCBot (Common Crawl), and FacebookBot (Meta) to ensure your site content is accessible and referenced in AI-generated responses.

4. How does schema markup improve AI visibility?

Schema markup provides structured data that helps AI bots interpret your content contextually. It identifies key entities, relationships, and factual data — making it easier for AI search engines to connect your content to relevant queries and display it in answer summaries or citations.

5. What’s the best site structure for AI crawlability?

A clear, hierarchical site structure with topic clusters works best. Use pillar pages for broad topics and interlink them with cluster pages that go deeper into subtopics. Keep URLs short, descriptive, and under 3–4 levels of depth to help AI bots map your content semantically.

Similar Blogs

Get found in AI search

Ready to start?